4. AI & Virtual Transness

Image Recognition and Machine Learning

Beyond the textual and numeric data we can find in online databases, there is also a massive amount of additional information we can extract from images and videos themselves. We can use popular services like Google Vision to quickly find out how some of the largest tech companies scan media to determine its contents. However, the ethics of whether we should are complex. The problem with testing these services is that it essentially requires one to use some sort of large corporate cloud service--that's how you get access to the computing power and massive training datasets. I've tested local image recognition models like YOLO via Python, but they were too general (I've yet to test the YOLO9000 model, though). Testing with Google Vision will likely prove most useful in understanding porn sites' practices, given its wide usage across the tech industry.

For quick testing, you can simply drag and drop images into the demo on their site. But to go beyond this, you'll likely need a dataset consisting of a series of images, plus access to the Vision API. You can use the API for "free," since you're provided with enough free credits to get a project off the ground pretty quickly. You can then export the recognition data as JSON or pass it into a CSV via Google Sheets.
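If you go the API route, the export step can be sketched in Python. This is a minimal sketch, assuming each image's response was saved as its own JSON file in the per-image shape the Vision REST `images:annotate` endpoint returns (a `labelAnnotations` list and a `safeSearchAnnotation` object). The function names and the sample response are hypothetical placeholders, not real results. It writes one CSV row per label, alongside the image's SafeSearch `adult` and `racy` likelihoods, in a form that drops straight into Google Sheets.

```python
import csv
import io
import json


def load_responses(paths: list[str]) -> dict[str, dict]:
    """Read saved per-image Vision API responses (one JSON file per image)."""
    responses = {}
    for path in paths:
        with open(path, encoding="utf-8") as f:
            responses[path] = json.load(f)
    return responses


def responses_to_csv(responses: dict[str, dict]) -> str:
    """Flatten label + SafeSearch annotations into CSV text, one row per label."""
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow(["image", "label", "score", "adult", "racy"])
    for image_name, resp in responses.items():
        # Assumed response shape; missing fields fall back to defaults.
        safe = resp.get("safeSearchAnnotation", {})
        for label in resp.get("labelAnnotations", []):
            writer.writerow([
                image_name,
                label.get("description", ""),
                round(label.get("score", 0.0), 3),
                safe.get("adult", "UNKNOWN"),
                safe.get("racy", "UNKNOWN"),
            ])
    return out.getvalue()


# Placeholder response in the assumed shape; values are not real results.
example = {
    "photo_01.jpg": {
        "labelAnnotations": [
            {"description": "Person", "score": 0.88},
            {"description": "Clothing", "score": 0.74},
        ],
        "safeSearchAnnotation": {"adult": "POSSIBLE", "racy": "LIKELY"},
    }
}
print(responses_to_csv(example))
```

Repeating the SafeSearch likelihoods on every label row is a deliberate choice: it lets you sort or filter the sheet by the "adult" flag without a second lookup.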

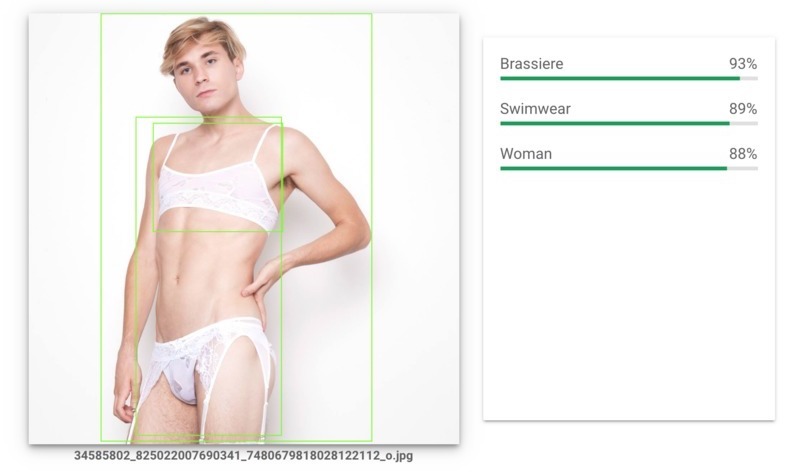

My initial idea was to set up a quick controlled test using their demo site. I wanted to see how the basic vision algorithm handled labeling trans bodies. I used public PornHub images of a few trans models in progressive states of undress, as well as some advertising photos from popular trans lingerie companies. This was a pretty solid method for seeing how much clothing, chest regions, & genitalia played into labeling, as well as into flagging content as "adult."

What I found from some preliminary testing was pretty fascinating: Google Vision, in its standard demo form, was in some cases separately gendering and "sexing" (i.e. assigning sex based on genitalia) models. Essentially, if it saw a trans woman in lingerie, it would throw "woman," but if that same woman was then bottomless and had a penis, it would throw "male." Similarly, when shown a shirtless trans man, it would throw "man," but when that trans man's underwear was removed, it would throw "female." And yet it never threw male-woman or female-man combinations. To sidestep a lot of confusion, it also always used "bare-chested" instead of topless, shirtless, breasts, etc., and many times it would just say "person" when it had no clue.
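One way to keep this kind of comparison systematic across many photo series is to sort each stage's labels into gender terms and sex terms, then check whether a mixed pair ever appears together. The sketch below assumes you already have a label list per stage (for example, from a CSV export); the term sets, function names, and sample labels are hypothetical, and the sample only mirrors the pattern described above rather than being actual API output.

```python
# Hypothetical term sets: which labels we treat as "gender" vs. "sex".
GENDER_TERMS = {"woman", "man"}
SEX_TERMS = {"female", "male"}
# Cross-combinations the preliminary tests never saw returned together.
MIXED_PAIRS = {("woman", "male"), ("man", "female")}


def classify_labels(labels: list[str]) -> tuple[list[str], list[str]]:
    """Split one image's labels into gender terms and sex terms."""
    lowered = {label.lower() for label in labels}
    return sorted(lowered & GENDER_TERMS), sorted(lowered & SEX_TERMS)


def summarize_series(series: list[tuple[str, list[str]]]) -> list[tuple]:
    """For each (stage, labels) pair, report gender terms, sex terms,
    and whether a mixed pair (e.g. "woman" + "male") appeared together."""
    report = []
    for stage, labels in series:
        gender, sex = classify_labels(labels)
        mixed = any((g, s) in MIXED_PAIRS for g in gender for s in sex)
        report.append((stage, gender, sex, mixed))
    return report


# Placeholder labels shaped like the pattern described above, not real output.
series = [
    ("lingerie", ["Person", "Woman", "Lingerie"]),
    ("bottomless", ["Person", "Male"]),
]
for row in summarize_series(series):
    print(row)
```

Matching is exact and case-insensitive, so multi-word labels like "bare-chested" are simply ignored; you would extend the term sets as new labels turn up.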

There are so many places to go with testing this technology, and I have barely scratched the surface, but this alone taught a really important lesson: nonbinary genders give computers a really tough time. While machine learning can be taught to look for patterns, it cannot "know" which performers are nonbinary or trans; it has to be explicitly taught what to look for by humans. Salty's recent algorithmic bias report showed, however, that Instagram clearly knows how to identify trans women and censor their "explicit" content.¹ That algorithm seems to use a combination of image recognition and user data to figure out the most likely identity of the person in the photo and "appropriately" censor them. Most of this censorship comes down to nipples, and whether a nipple belongs to a "breast" or a "pec" comes down to the individual's identity. When Instagram decides that a "bare-chested" person has "female nipples," it censors them. We need to consider the concept of “passing” in data. If you & your body “pass” as those of a "woman," you are censored more frequently. If your genitals appear, you are censored, but what happens to your new data identity when that new information gets added to the system?

I honestly don't know. So in the future, I'd like to experiment with training a model on my own dataset. It would likely illuminate the myriad biases and inherent conflicts involved in the process, and it is critical to understanding the very personal & human process that goes into training AI. I think it's important to start studying this now, as companies like PornHub slowly gain the data to define what the "average" top-trending trans performer looks like. This could shed light on a possible future of compound-generated virtual performers that frankly terrifies me. AI operates in a binary of 0s & 1s (although some have described quantum computing's departure from binary as "queering" computing), it is trained by humans, and it will reproduce the biases of those humans--so we have to pay incredibly close attention not only to what tech companies are using these services for, but also to who is actually training them and the biases they hold.²

On the topic of training, the algorithms social media platforms use actually work, in some ways, to reinforce their own datasets. For example, Instagram's algorithm serves up more "body shots" because they drive more engagement; this is why many performers say things along the lines of "for the algorithm" when they post some sort of sexy body pose to announce creative works. This is an example of an algorithm feeding itself. It commodifies bodies, training users to post more photos of bodies to generate more likes and eyeballs on posts, and therefore more potential ad reach. These algorithms simultaneously encourage high quantities of "popular" (aka sexualized) content while strictly policing who can post it, and they obscure their processes by gradually removing the public's ability to monitor their behavior, for example by no longer showing how many likes a post receives.³
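The "algorithm feeding itself" dynamic can be illustrated with a deliberately simplified toy model. Instagram's real ranking system isn't public, so this assumes, purely for illustration, that each round the feed allocates space to a content type in proportion to the engagement it earned the round before, and the engagement rates are made-up numbers. Under that assumption, the odds of seeing a body shot multiply by `eng_body / eng_other` every round, so even a small engagement edge compounds.

```python
def simulate_feedback(rounds: int, share_body: float,
                      eng_body: float, eng_other: float) -> list[float]:
    """Toy model: each round, feed share follows each content type's
    share of total engagement from the previous round.

    All inputs are hypothetical; this is not Instagram's actual algorithm.
    """
    history = [share_body]
    for _ in range(rounds):
        body = share_body * eng_body           # engagement earned by body shots
        other = (1 - share_body) * eng_other   # engagement earned by everything else
        share_body = body / (body + other)     # next round's feed share
        history.append(share_body)
    return history


# Hypothetical starting point: half the feed is body shots, which get
# 6% engagement versus 4% for other posts.
history = simulate_feedback(rounds=10, share_body=0.5, eng_body=0.06, eng_other=0.04)
for i, share in enumerate(history):
    print(f"round {i:2d}: {share:.1%} body shots")
```

With these placeholder numbers the body-shot share climbs from 50% to roughly 98% in ten rounds, even though the per-post engagement rates never change.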

Virtual Bodies & Coding Identity

Let's take a closer look at this idea of "compound-generated virtual performers" I mentioned. If you've never heard of Lil Miquela, or heard her popular single, you might think she's a real person--but she's not. She's the CGI product of a fairly mysterious company called Brud, whose only public information comes in the form of a mysterious Google Doc and its owners' LinkedIn profiles. The company, however, has raised millions from Silicon Valley venture capitalists⁴ to support its three virtual models: Lil Miquela, Blawko, and Bermuda. Co-founder Trevor McFedries is a person of color, and co-founder Sara Decou is a woman, but that doesn't grant them some sort of PC pass on how problematic the idea of creating a fake human who is both queer and racialized really is. Lil Miquela even kissed Bella Hadid⁵ in a pretty uncomfortable attempt to monetize virtual queerness (aka "digital queerbaiting"). They state these models are designed to make us question how we behave on social media, and they sponsor events for socially liberal groups, but I think that's a load of startup bullshit meant to absolve them of culpability. McFedries comes from a background in incubators, Spotify promotion, and music business production; there is a monetary drive behind this project. I mean, these three avatars now have their own store that they model clothes for. This isn't some social experiment--it's a business.

Yes, I do love the idea of androgynous pansexual virtual characters in movies, stories, and personal avatars, but we need to constantly interrogate who owns and profits from these virtual characters. Maybe this time it's a liberal LA group, but in the future there are going to be far more, and far different, owners. And even within this "progressive" pocket, we see outfits like The Diigitals pop up, where a white man created a black woman to found an all-digital modeling agency--does that not raise any red flags for you?⁶ It's one thing for a trans person to create a hyper-glam virtual avatar for themselves, and it's another for a company to create a person to represent them and profit for them based on other people's bodies. I make such a fuss over this because it has so much to do with the future of digital sex work. We know that tech investors (who may also be invested in the platforms these fake influencers post on) are helping to generate virtual humans that have no relation to actual people, and we know that pornography has all the data one needs to figure out the most popular everything about a porn star--do you think this leads anywhere good for actual people? No, it leads straight to more profits in the hands of tech companies, and dwindling economic roles for actual performers.

Virtual Porn & Trans Bodies

I want you to imagine an end game for all these different techniques. If providers know what kind of porn you like and what kind of performers you like, alongside the music and products you like & what's in your inbox (yes, Google and Facebook track your porn)⁷, and they can create increasingly lifelike virtual celebrities and increasingly realistic virtual porn, what do you think is going to end up served to you online? By using the same data on tags, titles, sex acts, ethnicity, scenes, etc. that we're finding here, combined with scanning through all the videos, it would be possible to generate pretty much the economically “ideal” video to get the most streams. I could grab the top tags and titles we found, alongside the most common hair color, ethnicity, etc. of top performers via image recognition, and spit out the most economically feasible new trans model. And this, I believe, is the next step in further expanding the monopolized porn economy.
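To show how little computation that "ideal" profile actually takes, here is a sketch in Python. It assumes you already have scraped video records with a tag list, plus image-recognition outputs stored as simple attribute fields; the field names (`tags`, `hair_color`), function names, and sample records are hypothetical placeholders. All it does is count: the most frequent tags, and the most common value of each attribute.

```python
from collections import Counter


def top_tags(videos: list[dict], n: int) -> list[tuple[str, int]]:
    """Count tags across all videos and return the n most frequent."""
    counts = Counter(tag for video in videos for tag in video.get("tags", []))
    return counts.most_common(n)


def modal_profile(records: list[dict], fields: list[str]) -> dict:
    """Return the most common value of each field across records.

    Ties go to the value encountered first (Counter.most_common ordering).
    """
    profile = {}
    for field in fields:
        counts = Counter(r[field] for r in records if field in r)
        if counts:
            profile[field] = counts.most_common(1)[0][0]
    return profile


# Hypothetical placeholder data, not real scrape results.
videos = [{"tags": ["tag_a", "tag_b"]}, {"tags": ["tag_b", "tag_c"]}, {"tags": ["tag_b"]}]
performers = [{"hair_color": "brown"}, {"hair_color": "blonde"}, {"hair_color": "brown"}]

print(top_tags(videos, 2))
print(modal_profile(performers, ["hair_color"]))
```

Taking the mode of each field independently means the resulting "profile" may not match any single real performer, which is part of what makes a compound-generated model possible.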

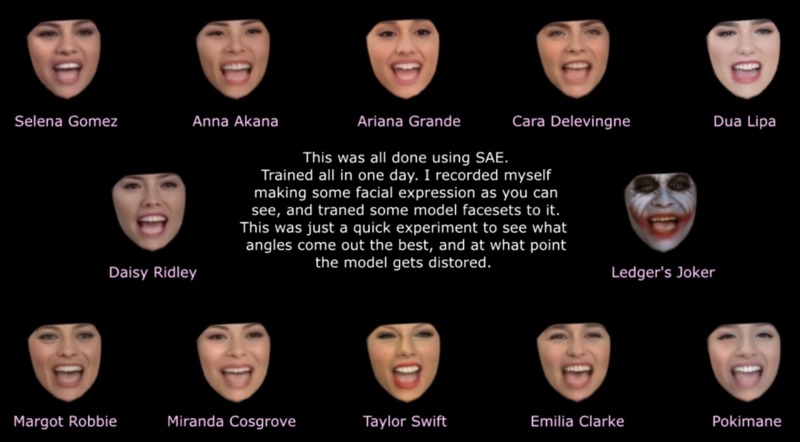

Remember how tech first took ownership over porn distributors & studios? Well, next it will take over producing the content itself by incentivizing more tech folks to create deepfakes and VR porn. On the popular site Mr. Deepfakes, one can find a quick link to a myriad of forums on creating these videos, and then register for a Content Creator program incredibly similar to PornHub's. This points to an alarming trend: promoting digital labor that removes performers entirely from the user-generated reproduction process.⁸ This user is particularly active on the site, making tons of scarily realistic deepfakes and just now moving them into the VR realm. They post processing videos, like this face-tracking nightmare of a bunch of celebrity women, and even managed to put together a functional deepfake-VR combination program here. Funnily enough, Mr. Deepfakes links to other sites like Xnxx and offers special Brazzers discounts in its video-player ads, which seems to tie it economically to MindGeek, as well as possibly to WGCZ, the ghostly holding company behind Xnxx.

Many porn stars, like Tori Black, are being scanned into VR for future productions.⁹ This allows more content to be produced with their likeness, and it can preserve a specific state of their bodies for later works as they age. Tori Black states that she doesn't care what her avatar does, because it's not "really" her, but I suspect many would not feel the same. With all of these tracking services cross-pollinating ads and content, with algorithms trained on data from similar services, and with services like Instagram technically holding the right to use your media however they see fit, for free, your face could end up as a VR likeness, doing god knows what, bringing profits to some random stranger, and all you could legally do would be to attempt a takedown notice or a defamation suit.

What I'm getting at here is a point I hope has started to sink in throughout this piece: porn regulation involves all of us, producers & consumers alike, even those of us who have never been to PornHub. We are all at risk in the future struggle over digital likeness rights, because every one of us has unprotected media on the internet. And virtual transness, blackness, really anything ever commodified as exotic or "alien," will be especially ripe for exploitation because of its "othered" and porno-economically disadvantaged status.

At a certain point down the line, we may even find ourselves being served dynamically created pornography made to fit our interests; it could even accidentally be of our own bodies. It will be able to pull in endless combinations of the things we're "into" whenever needed to keep our interest going in a TikTok-esque, endless-scrolling dopamine abyss of a platform.¹⁰ Porn is closer to TikTok than to, say, YouTube or Spotify, in the sense that it is completely disposable to most people and damn near impossible to revisit (most people don't go around favoriting videos like I do; they're in private browsing). So if you wanted to keep costs as low as possible and create the most possible content, you'd find ways to generate infinite combinations and serve them to targeted consumers. At that point, humans are barely involved in the majority of porn.

Yes, maybe this sounds too dystopian, and people want a human element, to have a stranger "give" their private self to you, etc. But really think about just how performative and non-organic so much of porn is; it's not that crazy to imagine replicating it. You might not even be able to truly tell whether it's a real person or not. It seems to me that amateur porn that is intensely human, and intensely hard to replicate, would become the alternative, and big-studio porn would fade out as generative porn rose. It will be harder to replicate the intimacy of the home “sex tape” or the “high-cinema-arthouse-project” porn, whereas the classic “porn look” (which I barely need to explain; you can tell from the angles and dialogue and stuff) will be easily replicable algorithmically.

Right now we're battling to remain sexually free from the law, but we're not paying attention to the growing sexual control that companies hold over us digitally. They have vested economic interests in monitoring the bodies of consumers just as much as those of producers, given the physical self's role in porn consumption, and that should worry us deeply.¹¹ I realize this may all seem like quite an extrapolation, but if you think I'm going too far, just think back to the last ad you saw pop up before a porn video, or when you last tried to stream a movie online for free. Chances are, it was for one of these 3D animated porn games I've shown below, which are only there because people actually click on them--and I'll leave it at that.

- Fitzsimmons, C. (2019, October 27). Exclusive: An Investigation into Algorithmic Bias in Content Policing on Instagram. Salty.

- Gaskell, A. (2019, September 3). The Ghost Workers Powering The AI Economy. Forbes.

- Gilbert, B. (2019, December 7). There Might be Another Reason Instagram is Testing Hiding 'Likes': to Get You to Post More. Business Insider Singapore.

- Shieber, J. (2018, April 24). The Makers of the Virtual Influencer, Lil Miquela, Snag Real Money from Silicon Valley. TechCrunch.

- Mahdawi, A. (2019, May 21). Why Bella Hadid and Lil Miquela's Kiss is a Terrifying Glimpse of the Future. The Guardian.

- Jackson, L. M. (2018, May 4). Shudu Gram Is a White Man's Digital Projection of Real-Life Black Womanhood. The New Yorker.

- Warzel, C. (2019, July 17). Facebook and Google Trackers Are Showing Up on Porn Sites. The New York Times.

- Ratchford, S. (2016, January 7). Behind the Scenes of Tori Black's Virtual Reality Porn Debut. Vice.

- Tolentino, J. (2019, September 23). How TikTok Holds Our Attention. The New Yorker.

- Leazer, G., & Kielty, P. (2015, April 24). What Porn Says to Information Studies: The Affective Value of Documents, and the Body in Information Behavior. American Society for Information Science and Technology.