Methods

"Embed Dump" Database Download & Management

Alright, if you're here with no interest in tackling the entire database, and you want to instead just use that HubTraffic tool to get a less detailed CSV--skip this. But if you want to be able to look through every single video title, tag, view, etc. like I do, then this section is for you. I promise you that if you don't have computer science experience it is not as daunting as it seems.

After downloading and unzipping the massive twenty-something gig CSV file from the Webmasters page on PornHub (or any of their Network sites), you'll need to find a way to actually open it. You can try throwing it into Tablaeu or Open Refine, but it just isn't going to work--it's too big. So the best solution I found alongside Professor Gabriel Pizzorno was to put the CSV into an SQL database. Now when we import it a bit later, it's possible to import the entire thing at once, but as a person who worries about randomly letting their computer run out of battery and couldn't let it sit running for multiple days uninterrupted, I preferred to cut it into a few pieces by about a million lines to import in chunks using some pretty simple Terminal commands (PC users, I'm sorry I just don't know, you'll have to Stack Overflow it). Each import took hours.

The easiest free way to do create an SQL database from these CSVs is to download MAMP (not pro, just regular), and MySqlWorkbench. With these tools you can set up a local server with one click via MAMP, and then create an SQL database to run on said server via a few clicks in MySQLWorkbench. Then via some simply SQL queries, you can get the data you need in a matter of seconds with its ultra-fast searching.

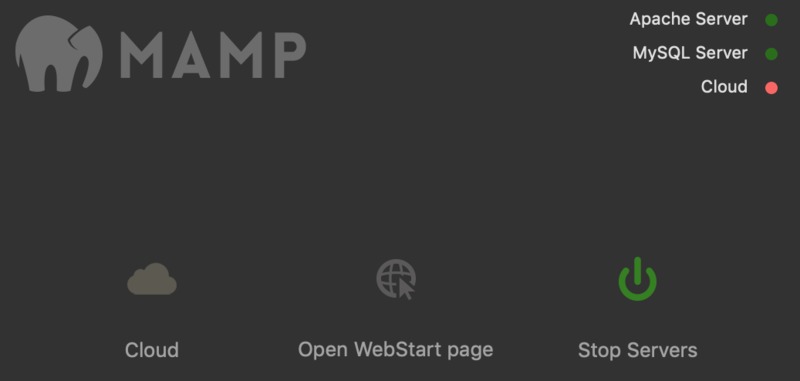

So pop open MAMP and click "Start Servers." That is literally it for MAMP, Apache and MySQL servers will have little green lights and Cloud will have red. Leave that running.

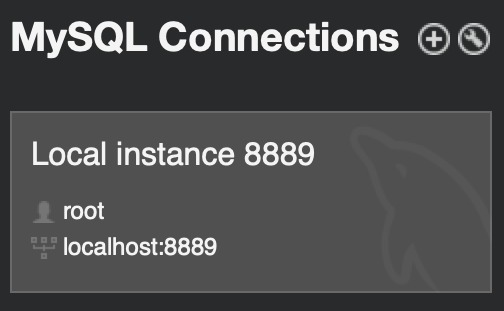

Open MySWLWorkbench and click on the box that says "Local Instance <your number>" with the number being set by whatever port MAMP is using (you can edit that in the MAMP preferences under "port"). If it asks you for a password, chances are it's "root"

Now that we're in and connected to the SQL database, we just need to right click on on the "sys" table in the "Schema" column displayed on the left of the window and hit "Table Data Import Wizard". Now you just import those CSV chunks/that giant CSV and wait. After many, many hours. You'll have a beautiful SQL database you can rapidly search through, plug straight into applications like Tablaeu, or use to export more focused and much smaller CSV's.

For example you can plug in the following query in the entry box in MySQLWorkbench and run it (via the lightning bolt button) to get only videos with the "Transgender" site-wide tag. Make sure to set "no limit" on the dropdown and then run the following query:

- SELECT * FROM [table] WHERE [ColumnName] LIKE '[string]%';

so for example in my case:

- SELECT * FROM XH.PH WHERE Site_Tags LIKE 'Transgender%';

This will give you about 30k videos matching the tag. But if you want to really dig into the larger swath of content that doesn't end up in the site-wide tag, you can run the following:

- SELECT * FROM XH.PH WHERE Custom_Tags LIKE 'shemale%' OR

Custom_Tags LIKE 'transgender%' OR

Custom_Tags LIKE 'trans%' OR

Custom_Tags LIKE 'tgirl%' OR

Custom_Tags LIKE 'tguy%' OR

Custom_Tags LIKE 'futa%' OR

Custom_Tags LIKE 'ladyboy%' OR

Custom_Tags LIKE 'tranny%' OR

Custom_Tags LIKE 'transsexual%' OR

Custom_Tags LIKE 'sissy%' OR

Custom_Tags LIKE 'crossdresser%' OR

Site_Tags LIKE 'Transgender%';

This gave me about 60k videos more generally related to trans content, which I then exported as both a new SQL Database to use in applications like Tableau, as well as in a CSV for quick reference and access to the raw text of the titles and tags.

That's the basics of dealing with this huge database. There are obviously other possible methods, but I found this to be the fastest way to get info from such large datasets. Plus, the ability to keep SQL tables that can then be merged into other services, etc. makes it a scalable system for future database inclusion.